For a decade, “liquid cooling” in the data center meant a copper cold plate bolted on top of a chip’s integrated heat spreader. That recipe has run out of room. The NVIDIA B200 ships with a 1,200 W TDP, the B300 raises that to roughly 1,400 W, and the Rubin and Rubin Ultra accelerators announced for the second half of 2026 push individual GPU power to between 1,800 W and 2,300 W.

At those numbers the bottleneck is no longer the loop — it is every layer between the transistor and the coolant. Micro-channel cooling — a category that includes both micro-channel cold plates (MCCP) and on-die or in-package micro-channel structures — removes those layers by cutting microscopic flow paths into copper, silicon, or 3D-printed metal that sit directly on, or inside, the chip substrate. Microsoft, TSMC, NVIDIA partners and a long list of suppliers have all converged on the same conclusion in the last 12 months: this is the only thermal architecture that scales to 2026-class AI silicon.

This article walks through the four pillars editors and engineers ask about most — micro-channel geometry, the chip substrate as a heat exchanger, heat transfer efficiency, and pressure drop — and benchmarks the leading 2026 implementations against one another.

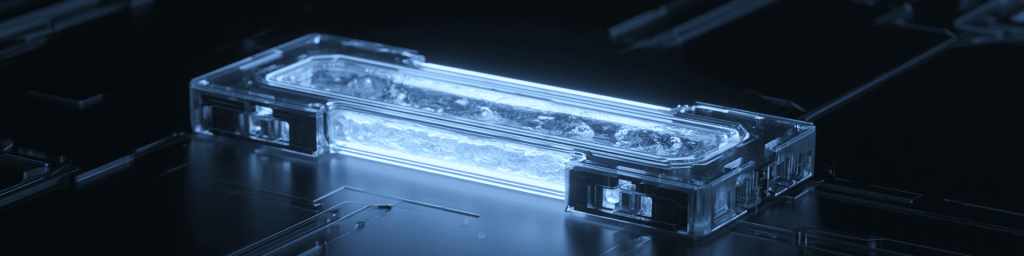

What is micro-channel cooling?

A “micro-channel” is generally defined in the heat-transfer literature as a flow passage with a hydraulic diameter between roughly 10 µm and 200 µm. At that scale the surface-area-to-volume ratio of the channel is one to two orders of magnitude higher than a conventional macro-channel, which is precisely why micro-channel heat sinks (MCHS) can dissipate 500–1,000 W/cm² of heat flux in real hardware.
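To make the scale argument concrete, here is a minimal sketch of the two quantities involved: the hydraulic diameter of a rectangular channel and its wetted-surface-to-volume ratio. The channel dimensions are illustrative assumptions chosen for this example, not figures from any vendor.

```python
def hydraulic_diameter(width_m: float, height_m: float) -> float:
    """Hydraulic diameter D_h = 4 * A / P of a rectangular duct."""
    area = width_m * height_m
    perimeter = 2.0 * (width_m + height_m)
    return 4.0 * area / perimeter


def surface_to_volume(width_m: float, height_m: float) -> float:
    """Wetted wall area per unit fluid volume (1/m), which equals P / A."""
    return 2.0 * (width_m + height_m) / (width_m * height_m)


# Assumed, illustrative cross-sections (not taken from any product):
micro = (50e-6, 200e-6)  # 50 um wide, 200 um tall micro-channel
macro = (2e-3, 5e-3)     # 2 mm wide, 5 mm tall conventional channel

d_micro = hydraulic_diameter(*micro)
d_macro = hydraulic_diameter(*macro)
ratio = surface_to_volume(*micro) / surface_to_volume(*macro)

print(f"micro D_h = {d_micro * 1e6:.0f} um")
print(f"macro D_h = {d_macro * 1e3:.2f} mm")
print(f"surface-to-volume advantage = {ratio:.1f}x")
```

With these assumed dimensions the micro-channel has a hydraulic diameter of 80 µm, inside the 10 to 200 µm band, and roughly 36 times more wall area per unit of coolant volume than the macro-channel. Because the ratio P / A equals 4 / D_h, the advantage is simply the ratio of the two hydraulic diameters.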

Three families of micro-channel cooler now coexist in commercial roadmaps:

- Micro-channel cold plate (MCCP). A copper plate with sub-millimeter fins, mounted on top of a lidded package, plumbed into a facility loop. NVIDIA’s Vera Rubin NVL72 platform uses MCCP technology as its baseline.

- Direct-die / direct-substrate cold plate. The lid and TIM2 are removed; a micro-channel cold plate sits on the bare die or on the carrier substrate. Mikros Technologies and Jabil have publicly demonstrated this “chip-to-chiller” interface for NVIDIA AI systems.

- Integrated micro-channel cooling on silicon (IMC-Si) and in-chip microfluidics. Channels are etched into the back-side silicon of the die or into a silicon micro-cooler that is fusion-bonded to the chip. TSMC presented this on a 3.3X-reticle CoWoS-R package; Microsoft demonstrated a similar concept on a server running Teams workloads.

The trajectory is clear: each generation pulls the coolant a layer closer to the transistor.

The chip substrate as a heat exchanger

Traditionally the chip substrate — organic carrier, silicon interposer, or RDL fabric — was a purely electrical structure. In the IMC-Si approach the substrate (or the die back-side, which is structurally similar) becomes the heat exchanger itself. TSMC’s published work shows micro-pillar arrays etched into silicon and fusion-bonded to a CoWoS-R interposer that spans roughly 3,300 mm² of active area.

Two design choices matter here:

- Bonded vs. monolithic. A bonded silicon micro-cooler can be co-developed with the package, side-stepping the yield risk of etching channels through a finished compute die. Monolithic back-side channels remove an interface entirely but tighten the manufacturing tolerance.

- Pillar/ridge geometry. Peer-reviewed studies on silicon MCHS report measurable Nusselt-number gains from rib-and-cavity and wedge-angle designs — for example, a wedge angle near 15° improving the Nusselt number by roughly 1.7–3.6% with negligible friction-factor penalty.

Either way, the substrate is no longer “the part the cooler sits on” — it is the cooler.
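As a rough illustration of what a Nusselt-number gain means in practice, the sketch below converts Nu into a convective heat-transfer coefficient with h = Nu · k / D_h. The baseline Nu of 4.36 is the textbook value for fully developed laminar flow in a circular tube with uniform heat flux, used here only as a placeholder; the 100 µm hydraulic diameter and the water conductivity near 40 °C are likewise assumptions, not values from the cited studies.

```python
def heat_transfer_coefficient(nusselt: float, k_fluid: float, d_h: float) -> float:
    """Convective coefficient h = Nu * k / D_h, in W/(m^2*K)."""
    return nusselt * k_fluid / d_h


K_WATER = 0.63   # W/(m*K), approximate for liquid water near 40 C (assumed)
D_H = 100e-6     # m, assumed hydraulic diameter
NU_BASE = 4.36   # placeholder baseline: laminar, circular tube, uniform heat flux

h_base = heat_transfer_coefficient(NU_BASE, K_WATER, D_H)
print(f"baseline h = {h_base:,.0f} W/(m^2*K)")

# Nusselt gains of 1.7% and 3.6%, the range quoted for the wedge-angle design
h_gain = {}
for gain in (0.017, 0.036):
    h_gain[gain] = heat_transfer_coefficient(NU_BASE * (1.0 + gain), K_WATER, D_H)
    print(f"Nu +{gain:.1%}: h = {h_gain[gain]:,.0f} W/(m^2*K)")
```

At fixed fluid and channel size, h scales linearly with Nu, so a 1.7–3.6% Nusselt gain is a 1.7–3.6% gain in convective coefficient. That is modest on its own, which is why the near-zero friction penalty is the more interesting half of that result.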

Heat transfer efficiency: the numbers that matter

When chip-makers talk about heat transfer efficiency at this scale, three metrics dominate.

Junction-to-ambient thermal resistance (θ_JA)

TSMC’s Direct-to-Silicon Liquid Cooling demonstration on CoWoS-R reported θ_JA as low as 0.055 °C/W at a coolant flow rate of 40 ml/s, compared to 0.064 °C/W for a conventional lidded liquid-cooled package with TIMs, a reduction of roughly 14%. The same platform sustained more than 2.6 kW TDP with a temperature delta below 63 °C.
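The arithmetic behind that comparison can be sketched in a few lines. The two θ_JA values are the ones quoted above; the 1 kW load is an illustrative assumption used only to show how a resistance difference turns into a temperature difference (ΔT = θ · P), and is not a test point from the demonstration.

```python
THETA_DIRECT = 0.055   # C/W, direct-to-silicon figure quoted above
THETA_LIDDED = 0.064   # C/W, lidded package with TIMs, quoted above


def temperature_rise_c(theta_c_per_w: float, power_w: float) -> float:
    """Temperature rise across a thermal resistance: delta_T = theta * P."""
    return theta_c_per_w * power_w


# Relative reduction in thermal resistance
reduction = (THETA_LIDDED - THETA_DIRECT) / THETA_LIDDED
print(f"thermal resistance reduction = {reduction:.1%}")

# Assumed, illustrative load; not a measured operating point
ILLUSTRATIVE_POWER_W = 1000.0
rise_direct = temperature_rise_c(THETA_DIRECT, ILLUSTRATIVE_POWER_W)
rise_lidded = temperature_rise_c(THETA_LIDDED, ILLUSTRATIVE_POWER_W)
saved = rise_lidded - rise_direct
print(f"at {ILLUSTRATIVE_POWER_W:.0f} W: {rise_direct:.0f} C vs {rise_lidded:.0f} C "
      f"({saved:.0f} C of headroom gained)")
```

The reduction works out to about 14%, and under the assumed 1 kW load it is worth about 9 °C of junction headroom. Thermal resistance generally varies with flow rate and power map, so the linear scaling here is only a first-order estimate.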

Sustainable power density

IEEE-published IMC-Si results show a near-full-reticle die cooled at a uniform TDP of 2 kW (3.2 W/mm²) using 40 °C water, with hot-spot capability of 14.6 W/mm² over a 1 mm² region. When average TDP is reduced to 0.8 kW, hot-spot tolerance rises above 20 W/mm² at the same pumping cost.
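A quick sanity check on these power-density figures, written as a small sketch. It uses only the numbers quoted above and assumes that the same die area applies at both TDP levels.

```python
TDP_FULL_W = 2000.0        # uniform TDP quoted above
DENSITY_FULL = 3.2         # W/mm^2 at that TDP
TDP_REDUCED_W = 800.0      # reduced average TDP quoted above
HOTSPOT_AT_FULL = 14.6     # W/mm^2 over a 1 mm^2 region

# Die area implied by the quoted TDP and average power density
implied_area_mm2 = TDP_FULL_W / DENSITY_FULL

# Average density at the reduced TDP, assuming the same die area
density_reduced = TDP_REDUCED_W / implied_area_mm2

# How far the hot spot sits above the average at full TDP
hotspot_ratio = HOTSPOT_AT_FULL / DENSITY_FULL

print(f"implied die area        = {implied_area_mm2:.0f} mm^2")
print(f"average density @0.8 kW = {density_reduced:.2f} W/mm^2")
print(f"hot spot vs. average    = {hotspot_ratio:.1f}x")
```

The implied area is 625 mm², and the reduced-TDP case averages 1.28 W/mm². In other words, lowering the background heat load frees thermal budget that can be spent on a more intense local hot spot.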

Comparison vs. conventional cold plates

Microsoft’s lab-scale microfluidic prototype reported up to 3× better heat removal than cold plates depending on workload, and a 65% reduction in maximum silicon temperature rise inside a GPU. These figures are workload- and configuration-dependent, but the directionality is consistent across independent groups.

For AI infrastructure operators, the practical consequence is that 45 °C facility water (the design point NVIDIA confirmed at CES 2026 for next-generation systems) becomes feasible without throttling, cutting chiller load and improving (lowering) effective PUE.
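To get a feel for what warm inlet water implies downstream, the sketch below applies a steady-state energy balance, ΔT = P / (ṁ · c_p), to a single accelerator. The 45 °C inlet and the 2,300 W load come from this article; the 40 ml/s flow rate is borrowed from the TSMC demonstration purely as an assumption, and the fluid is treated as pure water with approximate properties near 45 °C (production loops often use a glycol mix instead).

```python
def coolant_outlet_c(inlet_c: float, heat_w: float, flow_m3_s: float,
                     rho: float = 990.0, cp: float = 4180.0) -> float:
    """Outlet temperature from a steady-state energy balance.

    rho (kg/m^3) and cp (J/(kg*K)) default to approximate values for
    liquid water near 45 C; both are assumptions for this sketch.
    """
    mass_flow = rho * flow_m3_s           # kg/s
    return inlet_c + heat_w / (mass_flow * cp)


INLET_C = 45.0        # facility-water design point cited above
GPU_HEAT_W = 2300.0   # upper end of the per-GPU range cited in this article
FLOW_M3_S = 40e-6     # 40 ml/s, assumed per-device flow (hypothetical)

outlet = coolant_outlet_c(INLET_C, GPU_HEAT_W, FLOW_M3_S)
print(f"coolant rise = {outlet - INLET_C:.1f} C, outlet = {outlet:.1f} C")
```

Under these assumptions the coolant leaves roughly 14 °C warmer than it entered, at about 59 °C. Return water that warm can typically be rejected to ambient air with dry coolers in many climates, which is the mechanism behind the reduced chiller load.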

Pressure drop: the other half of the equation

Heat-transfer wins are easy to advertise; pressure drop is the cost. Smaller channels mean higher friction, higher pumping power, and — if two-phase flow is involved — instability risk.

Three rules of thumb emerge from current literature and product disclosures:

- Pumping budget should stay small relative to the heat removed. TSMC’s IMC-Si demonstration kept required pumping power well under 10 W for a 2 kW die, a parasitic load below 0.5% of die power, which preserves the PUE advantage.

- Geometry beats brute force. Two-phase pressure-drop studies in silicon micro-channel heat sinks (for instance, FC-72 work by Megahed et al.) consistently show that channel aspect ratio, manifold design, and inlet/outlet placement can move pressure drop by an order of magnitude before the heat-transfer coefficient changes meaningfully.

- Manifolds matter. Stanford and other groups have shown that an embedded 3D manifold above silicon micro-channels distributes flow vertically, shortening the in-channel path length and cutting pressure drop without sacrificing heat flux.

Designers therefore treat micro-channel cooling as a co-optimization problem: micro-channel geometry, manifold topology, coolant choice (water, dielectric, or two-phase), and chip floor-plan must be solved together.
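The trade-off can be sketched with two textbook relations: ideal hydraulic pumping power, P = Δp · Q, and the Hagen-Poiseuille pressure drop for laminar flow in a circular tube, Δp = 128 μ L Q / (π D⁴). Real micro-channels are rectangular and fed by manifolds, so this is a first-order illustration only. The per-channel flow, channel length, and viscosity are assumptions, and pairing the 10 W pumping figure with the 40 ml/s flow rate (both quoted in this article, but for different results) is also an assumption.

```python
import math


def pumping_power_w(delta_p_pa: float, flow_m3_s: float) -> float:
    """Ideal hydraulic pumping power P = delta_p * Q (ignores pump efficiency)."""
    return delta_p_pa * flow_m3_s


def laminar_tube_dp(mu: float, length_m: float, flow_m3_s: float,
                    diameter_m: float) -> float:
    """Hagen-Poiseuille pressure drop for laminar flow in a circular tube."""
    return 128.0 * mu * length_m * flow_m3_s / (math.pi * diameter_m ** 4)


# Parasitic fraction from the figures quoted above (10 W pump, 2 kW die)
parasitic = 10.0 / 2000.0

# Pressure-drop budget if 10 W were spent moving an assumed 40 ml/s
dp_budget = 10.0 / 40e-6   # Pa

# Assumed, illustrative single-channel case
MU_WATER = 6.5e-4          # Pa*s, approximate for water near 40 C
LENGTH = 0.01              # m, assumed 10 mm channel
Q_CHANNEL = 1e-8           # m^3/s per channel, assumed

dp_100 = laminar_tube_dp(MU_WATER, LENGTH, Q_CHANNEL, 100e-6)
dp_50 = laminar_tube_dp(MU_WATER, LENGTH, Q_CHANNEL, 50e-6)

print(f"parasitic load      = {parasitic:.2%}")
print(f"dp budget @ 40 ml/s = {dp_budget / 1e3:.0f} kPa")
print(f"dp, 100 um channel  = {dp_100 / 1e3:.1f} kPa")
print(f"dp,  50 um channel  = {dp_50 / 1e3:.1f} kPa ({dp_50 / dp_100:.0f}x)")
```

Halving the diameter at the same per-channel flow multiplies the pressure drop by 16, because of the D⁴ term. That is why shortening the flow path with manifolds, or splitting flow across more parallel channels, tends to pay off faster than simply pushing harder with the pump.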

The 2026 competitive landscape

| Player | Technology | Status as of 2026 |
|---|---|---|
| NVIDIA + ecosystem | MCCP on Rubin / Rubin Ultra; 45 °C inlet water | Volume ramp targeted for H2 2026 |
| TSMC | IMC-Si / Direct-to-Silicon on CoWoS-R | Demonstrated on 3.3X-reticle package, >2.6 kW TDP |
| Microsoft | In-chip microfluidics in server pilot | Lab-scale, up to 3× cold-plate performance |
| Mikros Technologies / Jabil | Direct-die MCCP “chip-to-chiller” | Productized for NVIDIA AI systems |
| xMEMS | µCooling MEMS-based micro-cooler | Showcased at TSMC 2026 NA Symposium |
| Supermicro / Vertiv / AVC / Auras | Rack-scale DLC + MCCP integration | Shipping with GB200/GB300; Rubin in design-in |

Sources for the table above are referenced inline throughout this article.

Where the market gap sits. Every major vendor has demonstrated a viable MCCP, but high-volume manufacturing of integrated (in-die or bonded) micro-channel coolers is still constrained by TSV density trade-offs, defect rates, and qualification of new TIM-less interfaces — all flagged explicitly by IDTechEx in its 2026–2036 advanced-packaging thermal forecast.

FAQ

Is micro-channel cooling the same as direct liquid cooling?

Not exactly. Direct liquid cooling (DLC) is the broader category — any system where coolant reaches a cold plate on or near the chip. Micro-channel cooling is a subset in which the cold plate, or the chip substrate itself, contains channels with hydraulic diameters under roughly 200 µm.

Why can’t we just keep using bigger cold plates?

Above roughly 1.5 kW per package, the thermal interface material (TIM) layers between die, lid, and cold plate dominate total resistance. Adding fin area no longer helps because heat cannot cross those interfaces fast enough.

Does micro-channel cooling work with hotter facility water?

Yes — and that is one of its biggest economic arguments. NVIDIA’s next-generation systems target 45 °C inlet water, which substantially reduces or eliminates mechanical chiller load.

What about pressure drop and pumping power?

Published IMC-Si results show a 2 kW silicon die cooled with under 10 W of pumping power, meaning the cooling system itself adds well under 1% parasitic load.

Will this technology trickle down to consumer GPUs?

Direct-die MCCPs are already mainstream in enthusiast water-cooling, and concepts like xMEMS µCooling are being targeted at thinner consumer devices. Full IMC-Si is unlikely to reach consumer silicon before the late 2020s.

Editor’s take

The transition from lid-mounted cold plates to micro-channel cooling embedded in the chip substrate is the most consequential thermal-engineering shift since heat pipes entered notebooks. The peer-reviewed efficiency numbers, the named partnerships (NVIDIA, TSMC, Microsoft, Mikros, Vertiv), and the public TDP roadmap to 2,300 W all point in the same direction.
